AI Can't Write Without Us

Notes on Artificial Intelligence Before I Start to Ignore It Entirely, Part 2

Part 2: Impersonation

In which I am fooled by AI, experience hallucinatory disappearances, and realize I will never be able to ignore AI entirely.

In my previous post “AI Can’t Read,” I focused on factual errors in Google’s AI-assisted “Overviews.” In this post I want to look at AI “impersonation,” another kind of error.

I’ve put a visual deep-fake (“null-fake” is perhaps a better term) at the top to quickly illustrate an uncanny and in my opinion unethical use of AI to impersonate a human being. Unethical even when the apparent human being does not exist.

My primary interest is impersonation not by image but by writing, by implied authorship. We will encounter an artificial author, some artificial scholars, a whole collection of artificial essays, and we will consider the spectral ‘poet’ behind AI-generated poetry.

The narrative of one personal experience will cover this whole range of artificial entities. My anecdote is about falling for an AI-generated essay—an essay about AI writing poetry, no less.

My title, “AI Can’t Write Without Us,” acknowledges that there is to some extent always a human being, or many of us, behind AI-generated writing. We know that when AI “writes” it draws upon the “large language” that goes into Large Language Models. That language is the human-generated language of its original training data and/or any task-specific added or constrained data. “All my old notebooks.” “Just Shakespeare.”

Recently, AI-generated language has itself become a part of this training data, which causes its own problems.

More to my point, and regardless of training data, human beings are the ones who ask AI to write. We input the prompts, we choose additional training text, we edit the results, we revise the prompts, we edit again, we sign off on the end result. Can we call this “our” writing? Possibly, if it’s a work email. But if it’s an essay? If it’s poetry?

About a month ago I found myself reading a lot of online commentary on Rupi Kaur. It became clear that there was a peculiar synergy between her very popular short poems, known for their simplicity and thematic focus, and the emergence of generative AI chatbots.

The first instance I noticed was a fun and generous article by Bot-o-Matic author Ian Gabriel Loisel. He describes his use of GPT-2, already out of date in 2023 but chosen for its “wonkiness,” to generate fake, failed and amusing Rupi Kaur-ish poems.

Oh make me come out

and see you

and let me feel your face

flourish.

Another accessible and entertaining instance of a human—a group of young humans actually—being creative at the same intersection is the YT video called “can A.I. actually replace writers? (no, but watch it try).” Here Rupi Kaur and the Instapoetry phenomenon are subjects of an experiment to see if AI can do better, given the dual assumptions that her sort of poetry is the most likely to be bested and that AI is “being marketed as a tool to replace everything.” As host and creator Josh Mifsud puts it: “what if I weaponized my two least favorite things against each other?”

I should have been content with these clever excursions, and moved on to non-AI topics. But I was drawn in by an essay that took a more serious approach: “AI-generated Poetry Bots—Do They Mimic Rupi Kaur Or Just Recombine Trauma Keywords.” It appeared in an unusual location: a “product insight” essay on the Alibaba consumer website.

Alibaba is the “Chinese Amazon” of online retail, comparable with its American competitor in size and business diversification. It is a cloud computing giant as well, and has its own AI.

Despite being a “product insight” the essay makes no mention of any specific product, whether chatbot or book of poems. Instead it presents what I found to be a balanced critical analysis of the possibility of AI poetry:

Kaur’s diction is deliberately accessible, her imagery recurrent, and her emotional valence highly predictable …. those very strengths make her voice an ideal training scaffold for algorithms designed to optimize for engagement, not insight.

The ostensible author is Clara Davis, pictured above. The name and image are at the top and bottom of the text, though no words directly assert that “she” is the author. Her bio begins “Family life is full of discovery,” but contains no biography or credentials.

For authoritative support the essay quotes an academic-sounding source:

“The danger isn’t that AI writes bad poetry. It’s that it trains us to accept shallow language as sufficient for deep feeling. When ‘root’ and ‘bloom’ become universal stand-ins for ancestral connection and growth, we lose the vocabulary to describe how my grandmother’s hands kneaded dough while telling stories of fleeing Lahore in ’47—or how my daughter names that same resilience ‘resistance.’ Language isn’t neutral infrastructure. It’s memory, history, and refusal—compressed into syntax. Algorithms compress it further, into probability.”

— Dr. Lena Chen, Computational Linguist & Poet, author of Code and Contour: Language, Power, and the Limits of AI

The championing of the specific suggests a human agenda. But there is also a perfect continuity of style and substance between the author’s main text and the quoted expert’s text.

It took me a while to realize it, but the whole essay is a hallucination. Or, since I agree with Ewan Morrison that “hallucination” is too anthropomorphic a word to apply to the “mind” of AI, let’s say that reading the article became for my mind a hallucinatory experience.

The profile pic for the article did not lead to any Clara Davis via image search. Google Lens suggests many near-misses, but none of them is the same face. You can tell—if you’re a sighted human.

Lens does find four exact matches. Two are at sites in unfamiliar languages (not Chinese) that my browser hesitates to visit. Two are invalid URLs. Bing returns only non-photographic “woman with brown hair” images.

I had to conclude that “Clara Davis” is one of those null-fake photos that is altered or hybridized to supply a convincing human face without using an actual human face.

Dr. Lena Chen the “Computational Linguist & Poet” does not exist either. Nor is there a book called Code and Contour: Language, Power, and the Limits of AI.

Realizing you’ve fallen for an AI impersonation is a sensation like falling for a scam: less alarming maybe, but more uncanny. There’s no human criminal at work, at least not in the usual sense, and no monetary loss. But there is embarrassment at being duped, and the spooky experience of having imagined Others—fellow humans whose “writing” I actually admired—suddenly become non-persons.

In this case, AI has apparently “written” a critique of AI writing, with the writing of poetry as the exemplar. The essay conveys a distinct skepticism regarding AI’s ability to succeed in any meaningful way. It’s ironic. Could it be intentionally ironic? Or is that layer only visible to a reader who realizes they have been fooled?

Equally ironic, and with a similar circularity, is the fact that there is another Alibaba ‘product insight’ essay entitled “How To Detect AI Generated Academic Citations.” It contains a quote from Dr. Lena Petrova, Senior Bibliometric Analyst, University of Manchester Library.

You guessed it—there’s no such person or position at University of Manchester Library.

Dr. Petrova’s advice for spotting fakes? “The most reliable signal isn’t a missing DOI [Digital Object Identifier] or journal—it’s the silence where there should be resonance. Real scholars leave traces….”

As I was marveling at these ironies and beginning to deal with the fact that a very topical and useful essay on the limits of AI’s ability to write poetry was itself written by AI, there was a real-time twist to the plot of this anecdote.

I was adding the web address for the essay to a link above. I wanted my readers to be able to read “AI-generated Poetry Bots—Do They Mimic Rupi Kaur Or Just Recombine Trauma Keywords” for themselves.

I noticed that it went to a 404. The web page I accessed in the morning did not exist in the afternoon. The essay had disappeared.

I checked the Wayback Machine. The page was too recent to have been captured. I realized with some anxiety that the only version now available was the page currently open on my laptop. I added the URL to the link anyway. Who knows, maybe it will be revived.

I was feeling a bit paranoid. For the record, here’s a screenshot of the opening paragraph. (Apologies for the image quality.)

Note the granularity of the journalistic set-up. I cannot confirm “GriefVerse” ever existed, or that this Instagram viral moment ever occurred, but on the face of it, it’s credible.

In the paragraphs that follow, we get an equally impressive philosophical framing:

The question is ontological: What happens when systems trained on mass-digitized vulnerability learn to replicate the grammar of healing—without ever having felt pain, held grief, or sat with silence? To answer it, we must move beyond aesthetics and examine data lineage, linguistic patterning, cultural context, and the quiet violence of flattening lived experience into training tokens.

Can you see how I was initially intrigued, even inspired by what I was reading?

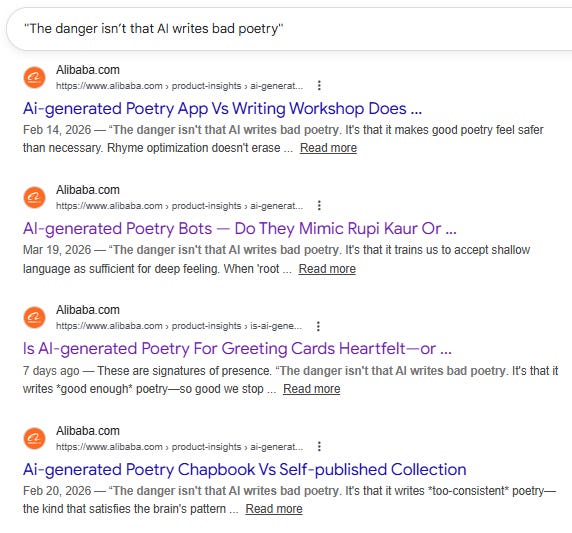

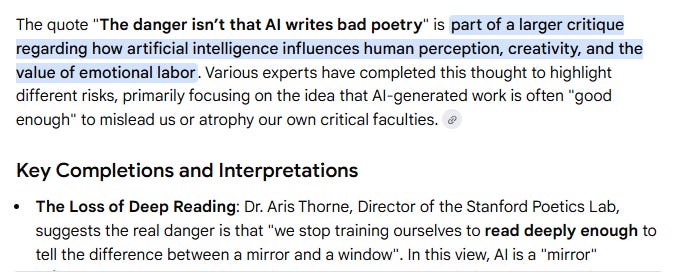

Further strangeness: once I realized I was dealing with an AI-generated essay, I started looking for the original sources of its content, in case there was detectable plagiarism involved and credit due to real authors. I searched for the phrase “The danger isn’t that AI writes bad poetry” from the “Dr. Chen” quote, hoping for precedents.

Here’s a screenshot of some exact matches. Note the varied but consistently cautioning nature of the messaging, and the apparent sensitivity to the nature of poetry:

I’d like to show all the hits for effect, but to save space I’ll list a prominent group among them—almost half the total—the ones alluding to poetry contest fraud:

Is AI-generated Poetry Winning Literary Contests Because … “The danger isn’t that AI writes bad poetry. It’s that we stop training ourselves to read deeply enough to tell the difference between a …

Is AI-generated Poetry Winning Literary Contests Because … “The danger isn’t that AI writes bad poetry. It’s that we begin measuring craft by what machines do well—precision, efficiency, coherence …

Is AI-generated Poetry Winning Literary Contests Because … “The danger isn’t that AI writes bad poetry. It’s that it writes competent poetry so efficiently it redefines the baseline for ‘acceptible …

Is AI-generated Poetry Winning Literary Contests Diluting …That distinction matters—not for the poem’s effect, but for what we choose to reward. The danger isn’t that AI writes bad poetry. It’s …

Is AI-generated Poetry Winning Literary Contests Because … “The danger isn’t that AI writes bad poetry. It’s that we begin mistaking technical novelty for moral or aesthetic courage. True craft …

Is AI-generated Poetry Winning Literary Contests—Or just… “The danger isn’t that AI writes bad poetry. It’s that it writes “competent” poetry so reliably that it lowers our threshold for what counts as meaningful …

Is AI-generated Poetry Winning Literary Contests Because ... “The danger isn’t that AI writes bad poetry. It’s that it writes *exactly* the kind of poetry our current systems are built to reward …

Is Ai-generated Poetry Winning Literary Contests—or Just ... “The danger isn’t that AI writes bad poetry. It’s that it writes *excellent mimicry*—so excellent it reveals how much of our aesthetic ...

Ai-generated Poetry Contests — Do Judges Score Based ... “The danger isn’t that AI writes ‘bad’ poetry. It’s that we’ll stop recognizing what makes poetry necessary: its capacity to unsettle ...

This was a bit overwhelming to discover. Twenty essays with the same phrase and very similar themes, each slightly re-purposed. Plus alarming indications of a rampant problem involving AI poems winning literary prizes.

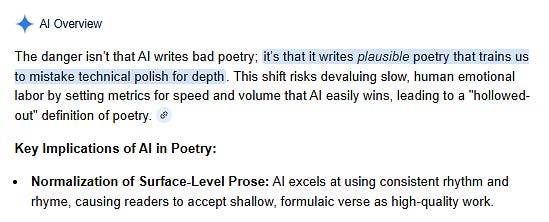

Google’s AI “Overview” offered its unsolicited summary of these search results. Thus, we have one AI summarizing another:

And the summary of the summary?

All twenty of these “2026 expert insights” were posted on the Alibaba website over a period of a few weeks in February and March. There are no other matches.

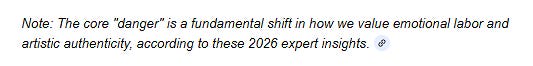

As for the repeated focus on AI poems winning prizes, this seems to be a bizarre multiple phantasm generated by AI’s own fabrications rather than actual news items. A Google search for such an AI win leads to only one poem, and searching that poem’s title loops back to nothing more than the Alibaba essays.

The Atlanta Review prize is real, but the 2023 winner was Kareem Tayyar, and I can find no trace of an AI scandal. The River Styx magazine contest is real, but their contest was not held in 2023.

Even though all the Alibaba AI poetry essays are now dead links and cannot be accessed, they continue to confuse search engines other than Google. I asked Bing “did an AI-generated poem win a poetry contest” and it answered:

Sounds a lot like “The Day the Sun Forgot Its Name,” right?

The two top hits supporting Bing’s answer are two of the Alibaba essays listed above. No doubt, if we could still read them, they would contain this title. If you in turn search this poem title on Google, you get a repeat of the hallucinatory summary above, with nothing but Alibaba essays for evidence.

For the record, the only legit instance I can find of an AI-generated poem winning a contest and being outed as bogus is the withdrawal of the winners’ roster in a 2024 Migrant Writers of Singapore poetry contest, where the rules had not specified that generative AI must not be used.

I took a break from writing and research at this point. I was a bit drained and perplexed and wondering whether, despite my love of Extraliterary tangents, I was wasting my time and mental health on this one.

Finding “insights” in a fake essay on Alibaba’s English language retail website? An essay located under: Mother, Kids & Toys > Remote Control Toys > Toy Robots? What was I thinking? What was this radical instability of AI-generated text—this strobing of the gaslight—doing to my thinking?

Tangent within a tangent re “toys”: the commercial publisher of Rupi Kaur’s poetry is Andrews McMeel, an American company that publishes anthologies of comic strips, calendars, and related toys. (Wikipedia, my emphasis.)

Later, when I had quelled my paranoia, I tried searching again for “The danger isn’t that AI writes bad poetry.” Google continued to conjure all the same dead Alibaba links and nothing else. At time of writing a handful are still being found by the same search.

Fake experts from these AI essays continue to corrupt AI search summaries.

The ‘mirror versus window’ analogy was probably stolen from Shannon Vallor’s book The AI Mirror (2024).

Eight out of nine sources cited for this AI summary are dead Alibaba AI poetry essays. The lone legitimate source (and lone functional link) does not mention Dr. Aris Thorne.

Of course there’s no Dr. Aris Thorne at Stanford Poetics Lab—and, no surprise, it’s actually called Stanford Center for Poetics. You can be sure, though, that the name and the “lab” appear in the Alibaba essays.

I’m actually glad I can no longer read them to confirm. If I could, I might never get out of this surreal maze.

It was, however, comforting to find a whole Reddit thread on the ubiquitous phantom “Dr. Aris Thorne” and related simulacra—like Elias Thorne the disgraced but non-existent poetry prize cheat.

Wow. Hundreds upon hundreds of AI generated stories, podcasts, books on amazon, youtube videos, all about this Dr. Aris Thorne, sometimes Dr. Aris Thorne is a marine biologist, other times a neuroscientist, other times an actual doctor.

This Reddit comment is a year old. So we know the Alibaba essays were not the beginning of this cycle of interwoven impersonations, but a further elaboration.

For me these essays are, if ultimately trivial, a nonetheless painfully clear example of the problem that Cory Doctorow describes so vividly when he says:

AI is the asbestos we are shoveling into the walls of our society and our descendants will be digging it out for generations …

Part of the initial appeal for me of “AI-generated Poetry Bots—Do They Mimic Rupi Kaur Or Just Recombine Trauma Keywords” was its concise balance of acceptance and caution regarding AI writing. That very balance ought to have been my first clue. The ‘balance’ was really my own bias—which is against using AI for writing in general, and most certainly for poetry—reflected in confidently balanced rhetoric.

The title alone is an instance of what has been called the “Not X, but Y” variant of the “negative parallelism” that AI writing is prone to. I may have missed it because I use that trick a lot myself. So I didn’t think: hey, it’s not “or,” it’s both.

Poetry bots mimic by recombining. It’s that simple.

Why was the essay—the entire cohort of essays—taken down from the Alibaba website? Perhaps because the AI was too obvious. They were riddled with fraudulent references. I prefer to think it’s because the ‘balance’ struck in those essays was more critical of AI’s limits and risks than could be tolerated by a company that produces AI products.

Given how quickly new online text is being absorbed into AI training data, the relative imbalance in the essays might actually be an effect of the recent surge in anti-AI opinions appearing online. What’s more—my paranoia has evolved into hopeful thinking here—it’s possible that those who worked with AI to generate the essays, the folks who did the prompting and steering and editing of AI’s output, were themselves in sympathy with the critics.

I’ll end with a few items from our lost essay’s concluding checklist of “What Readers and Writers Can Do”:

Support human poets: Subscribe to independent poetry presses, attend local readings, buy chapbooks directly from authors—especially those whose work resists algorithmic predictability.

Buy directly from the authors? Not exactly ‘on message’ from the point of view of an online retail mega-corporation. Nor would a corporation with its own generative AI appreciate this call for full disclosure and fair dealing:

Check the source: Who built the bot? Is there transparency about training data? Are marginalized voices credited—or merely mined?

I wonder if anybody got fired.